In this post, I want to briefly describe three cache write policies: write-through, write-back, and write-around. A cache is semiconductor memory in which data is placed temporarily to reduce the time required to service I/O requests from the host. Writing through a cache provides performance advantages over writing directly to disk. There are three ways to implement a write operation with a cache, and each has its own advantages and disadvantages.

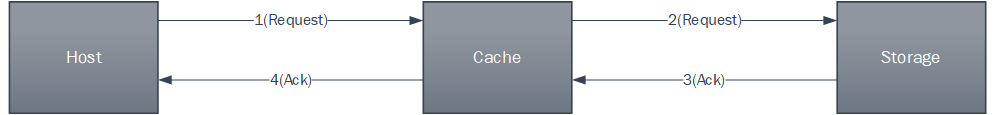

Write-back cache: Data is placed in the cache and an acknowledgment is sent to the host immediately. Later, the data from several writes is committed to the disk together. Write response times are much faster because the write operations are isolated from disk operations, but there is a risk of data loss if the cache fails before the data has been committed.
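To make the ordering concrete, here is a minimal Python sketch of a write-back policy. The `Disk` and `WriteBackCache` classes and their `write`/`flush` methods are hypothetical names for illustration, not a real storage API; the "disk" is just a dictionary that counts how many writes reach it.

```python
class Disk:
    """Hypothetical backing store that records every write that reaches it."""

    def __init__(self):
        self.blocks = {}
        self.write_count = 0

    def write(self, addr, data):
        self.blocks[addr] = data
        self.write_count += 1


class WriteBackCache:
    """Sketch of write-back: acknowledge once data is in cache,
    commit dirty blocks to disk later (hypothetical class)."""

    def __init__(self, disk):
        self.disk = disk
        self.data = {}      # cached contents, keyed by block address
        self.dirty = set()  # addresses not yet committed to disk

    def write(self, addr, data):
        self.data[addr] = data
        self.dirty.add(addr)
        return "ack"  # host is acknowledged before any disk I/O happens

    def flush(self):
        # Commit the data from several writes at once; repeated writes to
        # the same address collapse into a single disk write.
        for addr in sorted(self.dirty):
            self.disk.write(addr, self.data[addr])
        self.dirty.clear()


disk = Disk()
cache = WriteBackCache(disk)
cache.write(1, "a")
cache.write(1, "b")
cache.write(2, "c")
print(disk.write_count)  # 0: nothing has reached the disk yet
cache.flush()
print(disk.write_count, disk.blocks)  # 2 {1: 'b', 2: 'c'}
```

Until `flush()` runs, the only copy of the data lives in `cache.data`, which is exactly the data-loss window described above.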

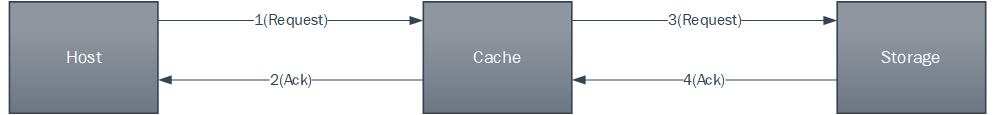

Write-through cache: Data is placed in the cache and immediately written to the disk. Only after the disk acknowledges the write to the cache is an acknowledgment sent to the host. While the risk of data loss is low, write response time is longer than with write-back because of mechanical disk operations.
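A minimal Python sketch of the same idea under a write-through policy follows. As before, `Disk` and `WriteThroughCache` are hypothetical illustration classes; the point is that `write` does not return its acknowledgment until the disk write has completed.

```python
class Disk:
    """Hypothetical backing store that records every write that reaches it."""

    def __init__(self):
        self.blocks = {}
        self.write_count = 0

    def write(self, addr, data):
        self.blocks[addr] = data
        self.write_count += 1


class WriteThroughCache:
    """Sketch of write-through: every write goes to cache and disk
    before the host is acknowledged (hypothetical class)."""

    def __init__(self, disk):
        self.disk = disk
        self.data = {}

    def write(self, addr, data):
        self.data[addr] = data
        # Synchronous: we return only after the disk write completes, so the
        # host's response time includes the disk's latency.
        self.disk.write(addr, data)
        return "ack"

    def read(self, addr):
        # Serve from cache when possible; on a miss, load from disk.
        if addr not in self.data:
            self.data[addr] = self.disk.blocks[addr]
        return self.data[addr]


disk = Disk()
cache = WriteThroughCache(disk)
cache.write(1, "a")
cache.write(1, "b")
print(disk.write_count, disk.blocks)  # 2 {1: 'b'}
cache.data.clear()    # simulate losing the cache contents
print(cache.read(1))  # b: the disk already holds the latest data
```

Every write costs a disk operation, which is the price paid for the low data-loss risk.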

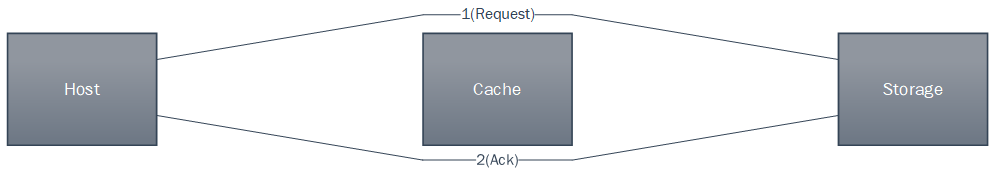

Write-around cache: The cache can be bypassed under certain conditions, such as large write I/Os. In this case, writes are sent directly to the disk so that large writes do not consume a large amount of cache space. This is particularly useful in environments where cache resources are constrained and the cache is needed for small random I/Os.
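Below is a minimal Python sketch of write-around, assuming a simple size-based rule. The `WriteAroundCache` class and its `bypass_threshold` parameter (and the 64 KiB value used) are hypothetical; real systems choose their own bypass conditions. By assumption, small writes are handled write-through here, and a bypassed write invalidates any cached copy of that address so later reads cannot return stale data.

```python
class Disk:
    """Hypothetical backing store that records every write that reaches it."""

    def __init__(self):
        self.blocks = {}
        self.write_count = 0

    def write(self, addr, data):
        self.blocks[addr] = data
        self.write_count += 1


class WriteAroundCache:
    """Sketch of write-around: large writes skip the cache (hypothetical class)."""

    def __init__(self, disk, bypass_threshold):
        self.disk = disk
        self.bypass_threshold = bypass_threshold  # hypothetical cutoff, in bytes
        self.data = {}

    def write(self, addr, data: bytes):
        if len(data) >= self.bypass_threshold:
            # Large write: go straight to disk and drop any stale cached copy.
            self.data.pop(addr, None)
        else:
            # Small write: keep it in cache (written through here, by assumption).
            self.data[addr] = data
        self.disk.write(addr, data)
        return "ack"


disk = Disk()
cache = WriteAroundCache(disk, bypass_threshold=64 * 1024)
cache.write(1, b"x" * 512)          # small: cached and written to disk
cache.write(2, b"y" * 1024 * 1024)  # large: bypasses the cache
print(sorted(cache.data), disk.write_count)  # [1] 2
```

The 1 MiB write never occupies cache space, leaving room for the small random I/Os that benefit most from caching.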